Brain-computer interfaces, or BCIs, hold immense potential for individuals with a wide range of neurological conditions, but the road to implementation is long and nuanced for both the invasive and noninvasive versions of the technology.

Bin He of Carnegie Mellon University is highly driven to improve noninvasive BCIs, and his lab uses an innovative electroencephalogram (EEG) wearable to push the boundaries of what’s possible.

For the first time on record, the group integrated novel focused ultrasound stimulation into a BCI to create a bidirectional system that both encodes and decodes brain waves using machine learning, in a study with 25 human subjects. By stimulating targeted neural circuits, this work opens a new avenue for significantly enhancing not only signal quality but also overall noninvasive BCI performance.

Noninvasive BCIs are lauded for being inexpensive, safe and applicable to virtually everyone. But because signals are recorded over the scalp rather than inside the brain, low signal quality imposes some limitations. The He group is exploring ways to improve the effectiveness of noninvasive BCIs and, over time, has used deep learning approaches to decode what an individual is thinking and use that information to control a cursor or robotic arm.

In their latest research, published in Nature Communications, the He group demonstrated that precision noninvasive neuromodulation using focused ultrasound could improve a BCI's performance for communication.

“This paper reports a breakthrough in noninvasive BCIs by integrating a novel focused ultrasound stimulation to realize bidirectional BCI functionality,” explained He, professor of biomedical engineering.

“Using a communication prosthetic, 25 human subjects spelled out phrases like ‘Carnegie Mellon’ using a BCI speller. Our findings showed that the addition of focused ultrasound neuromodulation significantly boosted the performance of EEG-based BCI. It also elevated theta neural oscillation that enhanced attention and led to enhanced BCI performance.”

For context, a BCI speller is a 6 × 6 visual motion aid containing the entire alphabet that is commonly used by nonspeakers to communicate. In He's study, subjects wore an EEG cap and, just by looking at the letters, generated EEG signals to spell the desired words.

When a focused ultrasound beam was applied externally to the brain's V5 area (part of the visual cortex), the performance of the noninvasive BCI improved among subjects. Rather than simply processing and decoding recorded signals, as in previous approaches, the neuromodulation-integrated BCI actively altered the engagement of neural circuits to maximize BCI performance.

“The BRAIN Initiative has supported more than 60 ultrasound projects since its inception. This unique application of noninvasive recording and modulation technologies expands the toolkit, with a potentially scalable impact on assisting people living with communication disabilities,” said Dr. Grace Hwang, program director at the Brain Research Through Advancing Innovative Neurotechnologies initiative (The BRAIN Initiative) at the National Institutes of Health (NIH).

Following this discovery, the He lab is further investigating the merits and applications of focused ultrasound neuromodulation of brain regions beyond the visual system to enhance noninvasive BCIs. The team also aims to develop a more compact focused ultrasound neuromodulation device for better integration with EEG-based BCIs, and to incorporate AI to further enhance overall system performance.

“This is my lifelong interest, and I will never give up,” emphasized He. “Working to improve noninvasive technology is difficult, but I strongly believe that if we can find a way to make it work, everyone will benefit. I will keep working, and someday, noninvasive lifesaving technology will be available for every household.”

More information: Joshua Kosnoff et al., "Transcranial focused ultrasound to V5 enhances human visual motion brain-computer interface by modulating feature-based attention," Nature Communications (2024). DOI: 10.1038/s41467-024-48576-8

News

Why More People in Their 30s Are Suddenly Getting Colon Cancer

A major Swiss study found that colorectal cancer is becoming increasingly common in adults under 50, even as rates decline in older age groups. Researchers in Switzerland have identified a concerning trend: while colorectal [...]

Researchers Compare MS Models to Human Tissue in Search for Better Therapies

Researchers identified key differences between two widely used multiple sclerosis models, showing how each can better study myelin damage, immune responses, and repair. The findings may improve efforts to develop treatments that restore lost [...]

Scientists Discover Genetic “Off Switch” That Supercharges CAR T Cells Against Cancer

A new study reveals a possible way to make CAR T-cell therapy more durable and effective by targeting a single gene-regulating protein. CAR T-cell therapy is widely seen as a breakthrough in personalized cancer [...]

New Vitamin B12-Based Therapy Could Change How Brain Cancer Is Treated

Researchers have identified a vitamin B12–based compound that appears capable of crossing the blood–brain barrier and selectively accumulating in glioblastoma tissue. For decades, one of the biggest problems in brain cancer treatment has had [...]

Simple Fiber Supplement Cuts Knee Arthritis Pain in Just 6 Weeks, Study Finds

A daily inulin supplement may help reduce knee osteoarthritis pain while revealing a possible link between gut health, muscle function, and pain sensitivity. For millions of people living with knee osteoarthritis, managing chronic pain [...]

This Common Vitamin May Help Stop Prediabetes From Turning Into Diabetes

Vitamin D may help prevent type 2 diabetes in people with specific genetic variations, offering a possible path toward personalized diabetes prevention. More than 40% of U.S. adults have prediabetes, a condition in which [...]

Ebola, hantavirus: Is the world prepared for the next pandemic?

Funding cuts to health research and a growing antivaccine movement are making it harder than ever to respond to viruses. The World Health Organization (WHO) has declared that an Ebola outbreak in Uganda and [...]

May 2026 Healthcare News and Trends: Market Signals That Matter

Artificial intelligence is dominating headlines, telehealth has settled into a new normal, and digital health continues to promise transformation. However, much of what is being discussed in healthcare today reflects potential rather than reality. [...]

Scientists Rewire Donor Stem Cells To Outsmart Aggressive Blood Cancers

Researchers have tested a gene-edited stem cell transplant designed to shield healthy blood-forming cells from powerful cancer-targeting immunotherapies. For patients with highly aggressive blood cancers, stem cell transplantation can offer a rare chance at [...]

Recent Digital Health Trends, Insights and News – May 2026

Last month marked continued progress as digital health moves into its next phase — from AI expanding into drug discovery and core infrastructure to new federal pathways accelerating device access and home-based care. Together, [...]

Cancer Mystery Solved: Scientists Discover How Melanoma Becomes “Immortal”

Scientists have uncovered a previously overlooked mechanism that may help melanoma cells become effectively “immortal.” Cancer cells face a major problem before they can become deadly: They have to figure out how to stop [...]

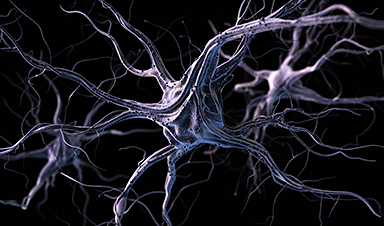

How Visual Neurons Organize Thousands of Synaptic Inputs

Summary: A new study uncovered the organizational rules that determine how neurons in the primary visual cortex process information. By imaging both the cell bodies (soma) and the individual synapses (on dendritic spines) of [...]

Scientists Just Found a Surprising Way To Destroy “Forever Chemicals”

Scientists have uncovered a new mechanism that may help break down highly persistent PFAS pollutants. PFAS have earned the nickname “forever chemicals” for a reason. These industrial compounds are so chemically durable that they [...]

Scientists Discover Cheap Material That Kills Deadly Superbugs

A new sulfur-rich antimicrobial polymer shows strong effectiveness against fungal and bacterial pathogens and may offer an affordable solution to antimicrobial resistance. Antimicrobial resistance is creating growing challenges for both healthcare and food production, [...]

What to Know About Cicada, or BA.3.2, the Latest SARS-CoV-2 Variant Under Monitoring

Like periodical cicadas, the insects for which it is nicknamed, SARS-CoV-2 Omicron subvariant BA.3.2 is only just beginning to emerge after lying low for an extended period since it first appeared. Although it was [...]

Scientists Say This Simple Supplement May Actually Reverse Heart Disease

Scientists in Japan say a common supplement may actually help “unclog” certain diseased heart arteries from the inside out. A simple food supplement sold in Japan may have helped reverse a dangerous form of [...]