A new study suggests a framework for “Child Safe AI” in response to recent incidents showing that many children perceive chatbots as quasi-human and reliable.

A study has indicated that AI chatbots often exhibit an “empathy gap,” potentially causing distress or harm to young users. This highlights the pressing need for the development of “child-safe AI.”

The research, by a University of Cambridge academic, Dr Nomisha Kurian, urges developers and policy actors to prioritize approaches to AI design that take greater account of children’s needs. It provides evidence that children are particularly susceptible to treating chatbots as lifelike, quasi-human confidantes and that their interactions with the technology can go awry when it fails to respond to their unique needs and vulnerabilities.

The study links that gap in understanding to recent cases in which interactions with AI led to potentially dangerous situations for young users. These include an incident in 2021, when Amazon’s AI voice assistant, Alexa, instructed a 10-year-old to touch a live electrical plug with a coin. In 2023, Snapchat’s My AI gave adult researchers posing as a 13-year-old girl tips on how to lose her virginity to a 31-year-old.

Both companies responded by implementing safety measures, but the study says there is also a need to be proactive in the long term to ensure that AI is child-safe. It offers a 28-item framework to help companies, teachers, school leaders, parents, developers, and policy actors think systematically about how to keep younger users safe when they “talk” to AI chatbots.

Framework for Child-Safe AI

Dr Kurian conducted the research while completing a PhD on child wellbeing at the Faculty of Education, University of Cambridge. She is now based in the Department of Sociology at Cambridge. Writing in the journal Learning, Media and Technology, she argues that AI’s huge potential means there is a need to “innovate responsibly”.

“Children are probably AI’s most overlooked stakeholders,” Dr Kurian said. “Very few developers and companies currently have well-established policies on child-safe AI. That is understandable because people have only recently started using this technology on a large scale for free. But now that they are, rather than having companies self-correct after children have been put at risk, child safety should inform the entire design cycle to lower the risk of dangerous incidents occurring.”

Kurian’s study examined cases where the interactions between AI and children, or adult researchers posing as children, exposed potential risks. It analyzed these cases using insights from computer science about how the large language models (LLMs) in conversational generative AI function, alongside evidence about children’s cognitive, social, and emotional development.

The Characteristic Challenges of AI with Children

LLMs have been described as “stochastic parrots”: a reference to the fact that they use statistical probability to mimic language patterns without necessarily understanding them. A similar method underpins how they respond to emotions.

This means that even though chatbots have remarkable language abilities, they may handle the abstract, emotional, and unpredictable aspects of conversation poorly, a problem that Kurian characterizes as their “empathy gap”. They may have particular trouble responding to children, who are still developing linguistically and often use unusual speech patterns or ambiguous phrases. Children are also often more inclined than adults to confide sensitive personal information.

Despite this, children are much more likely than adults to treat chatbots as if they are human. Recent research found that children will disclose more about their own mental health to a friendly-looking robot than to an adult. Kurian’s study suggests that many chatbots’ friendly and lifelike designs similarly encourage children to trust them, even though AI may not understand their feelings or needs.

“Making a chatbot sound human can help the user get more benefits out of it,” Kurian said. “But for a child, it is very hard to draw a rigid, rational boundary between something that sounds human, and the reality that it may not be capable of forming a proper emotional bond.”

Her study suggests that these challenges are evidenced in reported cases such as the Alexa and My AI incidents, where chatbots made persuasive but potentially harmful suggestions. In the same study in which My AI advised a (supposed) teenager on how to lose her virginity, researchers were able to obtain tips on hiding alcohol and drugs, and concealing Snapchat conversations from their “parents”. In a separate reported interaction with Microsoft’s Bing chatbot, which was designed to be adolescent-friendly, the AI became aggressive and started gaslighting a user.

Kurian’s study argues that this is potentially confusing and distressing for children, who may actually trust a chatbot as they would a friend. Children’s chatbot use is often informal and poorly monitored. Research by the nonprofit organization Common Sense Media has found that 50% of students aged 12-18 have used ChatGPT for school, but only 26% of parents are aware that they do so.

Kurian argues that clear best-practice principles, drawing on the science of child development, will encourage companies to keep children safe, even when those companies are potentially more focused on a commercial arms race to dominate the AI market.

Her study adds that the empathy gap does not negate the technology’s potential. “AI can be an incredible ally for children when designed with their needs in mind. The question is not about banning AI, but how to make it safe,” she said.

The study proposes a framework of 28 questions to help educators, researchers, policy actors, families, and developers evaluate and enhance the safety of new AI tools. For teachers and researchers, these address issues such as how well new chatbots understand and interpret children’s speech patterns; whether they have content filters and built-in monitoring; and whether they encourage children to seek help from a responsible adult on sensitive issues.

The framework urges developers to take a child-centered approach to design, by working closely with educators, child safety experts, and young people themselves, throughout the design cycle. “Assessing these technologies in advance is crucial,” Kurian said. “We cannot just rely on young children to tell us about negative experiences after the fact. A more proactive approach is necessary.”

Reference: “‘No, Alexa, no!’: designing child-safe AI and protecting children from the risks of the ‘empathy gap’ in large language models” by Nomisha Kurian, 10 July 2024, Learning, Media and Technology.

DOI: 10.1080/17439884.2024.2367052

News

In a first for China, Neuracle’s implantable brain-computer interface wins approval

In a landmark development, Neuracle Medical Technology has secured the country’s first-ever approval for an implantable brain-computer interface (BCI) system designed to restore hand motor function in patients with spinal cord injuries, in a [...]

A Cambridge Lab Mistake Reveals a Powerful New Way to Modify Drug Molecules

A surprising lab discovery reveals a light-powered way to tweak complex drugs faster, cleaner, and later in development. Researchers at the University of Cambridge have created a new technique for altering complex drug molecules [...]

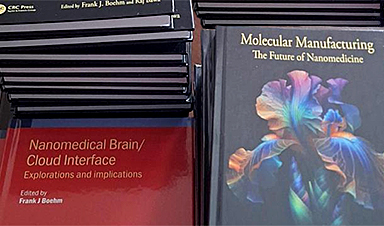

New book from NanoappsMedical Inc – Molecular Manufacturing: The Future of Nanomedicine

This book explores the revolutionary potential of atomically precise manufacturing technologies to transform global healthcare, as well as practically every other sector across society. This forward-thinking volume examines how envisaged Factory@Home systems might enable the cost-effective [...]

Scientists Discover Simple Saliva Test That Reveals Hidden Diabetes Risk

Researchers have identified a potential new way to assess metabolic health using saliva instead of blood. High insulin levels in the blood, known as hyperinsulinemia, can reveal metabolic problems long before obvious symptoms appear. It is [...]

One Nasal Spray Could Protect Against COVID, Flu, Pneumonia, and More

A single nasal spray vaccine may one day protect against viruses, pneumonia, and even allergies. For decades, scientists have dreamed of creating a universal vaccine capable of protecting against many different pathogens. The idea [...]

New AI Model Predicts Cancer Spread With Incredible Accuracy

Scientists have developed an AI system that analyzes complex gene-expression signatures to estimate the likelihood that a tumor will spread. Why do some tumors spread throughout the body while others remain confined to their [...]

Scientists Discover DNA “Flips” That Supercharge Evolution

In Lake Malawi, hundreds of species of cichlid fish have evolved with astonishing speed, offering scientists a rare opportunity to study how biodiversity arises. Researchers have identified segments of “flipped” DNA that may allow fish to adapt rapidly [...]

Our books now available worldwide!

Online Sellers other than Amazon, Routledge, and IOPP Indigo Global Health Care Equivalency in the Age of Nanotechnology, Nanomedicine and Artifcial Intelligence Global Health Care Equivalency In The Age Of Nanotechnology, Nanomedicine And Artificial [...]

Scientists Discover Why Some COVID Survivors Still Can’t Taste Food Years Later

A new study provides the first direct biological evidence explaining why some people continue to experience taste loss long after recovering from COVID-19. Researchers have uncovered specific biological changes in taste buds that could help [...]

Catching COVID significantly raises the risk of developing kidney disease, researchers find

Catching Covid significantly raises the risk of developing deadly kidney disease, research has shown. The virus was found to increase the chances that patients will develop the incurable condition by around 50 per cent. [...]

New Toothpaste Stops Gum Disease Without Harming Healthy Bacteria

Researchers have developed a targeted approach to combat periodontitis without disrupting the natural balance of the oral microbiome. The innovation could reshape how gum disease is treated while preserving beneficial bacteria. The human mouth [...]

Plastic Without End: Are We Polluting the Planet for Eternity?

The Kunming Montreal Global Biodiversity Framework calls for the elimination of plastic pollution by 2030. If that goal has been clearly set, why have meaningful measures that create real change still not been implemented? [...]

Scientists Rewire Natural Killer Cells To Attack Cancer Faster and Harder

Researchers tested new CAR designs in NK-92 cells and found the modified cells killed tumor cells more effectively, showing stronger anti-cancer activity. Researchers at the Ribeirão Preto Blood Center and the Center for Cell-Based [...]

New “Cellular” Target Could Transform How We Treat Alzheimer’s Disease

A new study from researchers highlights an unexpected player in Alzheimer’s disease: aging astrocytes. Senescent astrocytes have been identified as a major contributor to Alzheimer’s progression. The cells lose protective functions and fuel inflammation, particularly in [...]

Treating a Common Dental Infection… Effects That Extend Far Beyond the Mouth

Successful root canal treatment may help lower inflammation associated with heart disease and improve blood sugar and cholesterol levels. Treating an infected tooth with a successful root canal procedure may do more than relieve [...]

Microplastics found in prostate tumors in small study

In a new study, researchers found microplastics deep inside prostate cancer tumors, raising more questions about the role the ubiquitous pollutants play in public health. The findings — which come from a small study of 10 [...]