MIT researchers have introduced an efficient reinforcement learning algorithm that enhances AI's decision-making in complex scenarios, such as city traffic control.

By strategically selecting the most useful tasks for training, the algorithm achieves significantly improved performance with far less data, offering up to a 50x boost in efficiency. This method not only saves time and resources but also paves the way for more effective AI applications in real-world settings.

AI Decision-Making

Across fields like robotics, medicine, and political science, researchers are working to train AI systems to make meaningful and impactful decisions. For instance, an AI system designed to manage traffic in a congested city could help drivers reach their destinations more quickly while enhancing safety and sustainability.

However, teaching AI to make effective decisions is a complex challenge.

Challenges in Reinforcement Learning

Reinforcement learning models, the foundation of many AI decision-making systems, often struggle when confronted with even slight changes in the tasks they are trained for. For example, in traffic management, a model might falter when handling intersections with varying speed limits, lane configurations, or traffic patterns.

To boost the reliability of reinforcement learning models for complex tasks with variability, MIT researchers have introduced a more efficient algorithm for training them.

Strategic Task Selection in AI Training

The algorithm strategically selects the best tasks for training an AI agent so it can effectively perform all tasks in a collection of related tasks. In the case of traffic signal control, each task could be one intersection in a task space that includes all intersections in the city.

By focusing on a smaller number of intersections that contribute the most to the algorithm's overall effectiveness, this method maximizes performance while keeping the training cost low.

Enhancing AI Efficiency With a Simple Algorithm

The researchers found that their technique was between five and 50 times more efficient than standard approaches on an array of simulated tasks. This gain in efficiency helps the algorithm reach a better solution faster, ultimately improving the performance of the AI agent.

"We were able to see incredible performance improvements, with a very simple algorithm, by thinking outside the box. An algorithm that is not very complicated stands a better chance of being adopted by the community because it is easier to implement and easier for others to understand," says senior author Cathy Wu, the Thomas D. and Virginia W. Cabot Career Development Associate Professor in Civil and Environmental Engineering (CEE) and the Institute for Data, Systems, and Society (IDSS), and a member of the Laboratory for Information and Decision Systems (LIDS).

She is joined on the paper by lead author Jung-Hoon Cho, a CEE graduate student; Vindula Jayawardana, a graduate student in the Department of Electrical Engineering and Computer Science (EECS); and Sirui Li, an IDSS graduate student. The research will be presented at the Conference on Neural Information Processing Systems.

Balancing Training Approaches

To train an algorithm to control traffic lights at many intersections in a city, an engineer would typically choose between two main approaches. She can train one algorithm for each intersection independently, using only that intersection's data, or train a larger algorithm using data from all intersections and then apply it to each one.

But each approach comes with its share of downsides. Training a separate algorithm for each task (such as a given intersection) is a time-consuming process that requires an enormous amount of data and computation, while training one algorithm for all tasks often leads to subpar performance.

Wu and her collaborators sought a sweet spot between these two approaches.

Advantages of Model-Based Transfer Learning

For their method, they choose a subset of tasks and train one algorithm for each task independently. Importantly, they strategically select individual tasks that are most likely to improve the algorithm's overall performance on all tasks.

They leverage a common technique from the reinforcement learning field called zero-shot transfer learning, in which an already trained model is applied to a new task without any further training. With transfer learning, the model often performs remarkably well on a new task that closely resembles the one it was trained on.

"We know it would be ideal to train on all the tasks, but we wondered if we could get away with training on a subset of those tasks, apply the result to all the tasks, and still see a performance increase," Wu says.

MBTL Algorithm: Optimizing Task Selection

To identify which tasks they should select to maximize expected performance, the researchers developed an algorithm called Model-Based Transfer Learning (MBTL).

The MBTL algorithm has two pieces. For one, it models how well each algorithm would perform if it were trained independently on one task. Then it models how much each algorithm's performance would degrade if it were transferred to each other task, a concept known as generalization performance.

Explicitly modeling generalization performance allows MBTL to estimate the value of training on a new task.

MBTL does this sequentially: it first chooses the task that leads to the highest performance gain, then selects additional tasks that provide the biggest subsequent marginal improvements to overall performance.

Since MBTL only focuses on the most promising tasks, it can dramatically improve the efficiency of the training process.
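The sequential selection described above can be illustrated with a minimal sketch. This is not the authors' implementation. It assumes, purely for illustration, that tasks sit on a one-dimensional axis, that a model trained on any task reaches the same training performance, and that performance drops linearly with the distance between the training task and the target task. The function names and the `train_perf` and `slope` parameters are hypothetical.

```python
from typing import List, Optional, Sequence


def estimated_performance(source: float, target: float,
                          train_perf: float = 1.0, slope: float = 0.1) -> float:
    """Assumed model: performance of a policy trained on `source`
    when applied zero-shot to `target` (linear drop with distance)."""
    return max(0.0, train_perf - slope * abs(source - target))


def greedy_select(tasks: Sequence[float], budget: int) -> List[float]:
    """Pick up to `budget` training tasks, each time choosing the one with
    the largest estimated marginal gain summed over all target tasks."""
    selected: List[float] = []
    # Best estimated performance achieved so far on each target task.
    best = [0.0] * len(tasks)
    for _ in range(budget):
        best_gain = 0.0
        best_task: Optional[float] = None
        for cand in tasks:
            if cand in selected:
                continue
            # Marginal gain: improvement over the current best, per target.
            gain = sum(max(estimated_performance(cand, t) - b, 0.0)
                       for t, b in zip(tasks, best))
            if gain > best_gain:
                best_gain, best_task = gain, cand
        if best_task is None:  # no remaining task improves the estimate
            break
        selected.append(best_task)
        best = [max(b, estimated_performance(best_task, t))
                for t, b in zip(tasks, best)]
    return selected


if __name__ == "__main__":
    # Eleven hypothetical tasks (for example, intersections indexed 0..10).
    print(greedy_select(list(range(11)), budget=3))
```

Under these assumptions the first task chosen is the central one, because it transfers reasonably well to every other task. Later picks fill in the regions where the estimated transfer performance is weakest.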

Implications for Future AI Development

When the researchers tested this technique on simulated tasks, including controlling traffic signals, managing real-time speed advisories, and executing several classic control tasks, it was five to 50 times more efficient than other methods.

This means they could arrive at the same solution by training on far less data. For instance, with a 50x efficiency boost, the MBTL algorithm could train on just two tasks and achieve the same performance as a standard method that uses data from 100 tasks.

"From the perspective of the two main approaches, that means data from the other 98 tasks was not necessary or that training on all 100 tasks is confusing to the algorithm, so the performance ends up worse than ours," Wu says.
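The arithmetic behind the 100-versus-two example can be written out in a few lines. The sketch below assumes efficiency is measured simply as the ratio of tasks trained on, which matches the example in the article but may not be the exact metric used in the paper. The function name is hypothetical.

```python
def efficiency_ratio(baseline_tasks: int, selected_tasks: int) -> float:
    """Ratio of tasks a baseline trains on to tasks the selective method trains on.
    Assumes both reach the same final performance."""
    if selected_tasks <= 0:
        raise ValueError("selected_tasks must be positive")
    return baseline_tasks / selected_tasks


if __name__ == "__main__":
    print(efficiency_ratio(100, 2))  # 50.0
```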

With MBTL, adding even a small amount of additional training time could lead to much better performance.

In the future, the researchers plan to design MBTL algorithms that can extend to more complex problems, such as high-dimensional task spaces. They are also interested in applying their approach to real-world problems, especially in next-generation mobility systems.

Reference: "Model-Based Transfer Learning for Contextual Reinforcement Learning" by Jung-Hoon Cho, Vindula Jayawardana, Sirui Li and Cathy Wu, 21 November 2024, arXiv (Computer Science > Machine Learning).

arXiv:2408.04498

The research is funded, in part, by a National Science Foundation CAREER Award, the Kwanjeong Educational Foundation PhD Scholarship Program, and an Amazon Robotics PhD Fellowship.

News

CEO of America’s largest public hospital system is ready to replace radiologists with AI

The chief executive of America’s largest public hospital system says he is prepared to start replacing radiologists with artificial intelligence in some circumstances, once the regulatory landscape catches up. Mitchell H. Katz, MD, president [...]

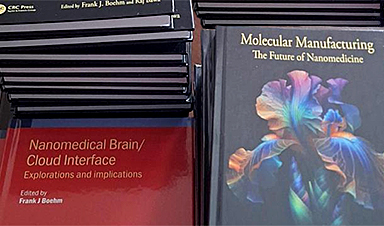

Our books now available worldwide!

Online Sellers other than Amazon, Routledge, and IOPP Indigo Global Health Care Equivalency in the Age of Nanotechnology, Nanomedicine and Artifcial Intelligence Global Health Care Equivalency In The Age Of Nanotechnology, Nanomedicine And Artificial [...]

Study finds higher heart disease risk in long COVID patients

People with long COVID are at increased risk of developing cardiovascular disease, according to a new study from Karolinska Institutet published in eClinicalMedicine. The results show that the risk of conditions such as cardiac arrhythmias [...]

The Corona variant Cicada is here – we know that

Online and on social media, reports are piling up about a new Sars-Cov-2 variant that is currently on the rise: BA.3.2, also known as Cicada. That's what it's all about: The Omicron variant BA.3.2, [...]

A Simple Blood Test Could Predict Dementia Risk 25 Years Early

A single blood marker may quietly signal dementia risk decades in advance. Scientists at the University of California, San Diego, have identified a blood signal that could forecast dementia risk decades before symptoms begin. Their [...]

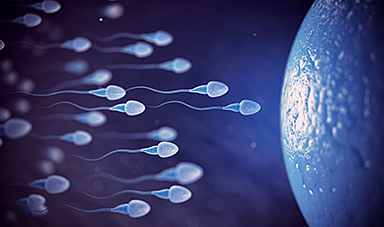

Sperm Get Lost in Space and Scientists Finally Know Why

Having a baby in space may be far more complicated than expected, as new research shows sperm struggle to find their way in microgravity. Starting a family beyond Earth could be more complicated than [...]

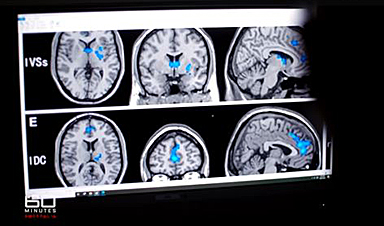

Digital Dementia – Brain fog and disassociation from being chronically online

New medical evidence, featured on 60 Minutes Australia, indicates excessive screen time is causing "digital dementia" in young Australians, with brain scans showing physical shrinkage and damage. Experts warn that high device usage (6-8 hours [...]

A new, highly mutated COVID variant called ‘Cicada’ is spreading in the US.

BA.3.2, a heavily mutated new COVID-19 variant which may be better able to escape immunity from vaccines or prior infection, is now spreading in the United States. Although COVID cases are currently low nationally, [...]

Molecular Manufacturing: The Future of Nanomedicine – New book from NanoappsMedical Inc.

This book explores the revolutionary potential of atomically precise manufacturing technologies to transform global healthcare, as well as practically every other sector across society. This forward-thinking volume examines how envisaged Factory@Home systems might enable the cost-effective [...]

Ancient bacteria strain discovered in ice cave is resistant to some modern antibiotics

In the depths of Scarisoara cave in Romania sits one of the world’s biggest underground glaciers, a monumental slab of ice the size of roughly 40 Olympic swimming pools that began to form around [...]

Scientists Identify “Good” Bacteria That May Prevent Long COVID

According to the WHO, about 6% of people worldwide who get COVID-19, roughly 400 million people, later develop a long-lasting form of the illness. That shows the condition remains a significant public health challenge. In [...]

New book from Nanoappsmedical Inc. – Global Health Care Equivalency

A new book by Frank Boehm, NanoappsMedical Inc. Founder. This groundbreaking volume explores the vision of a Global Health Care Equivalency (GHCE) system powered by artificial intelligence and quantum computing technologies, operating on secure [...]

RNA Recycling Extends Lifespan

Summary: Researchers discovered a biological “trash disposal” mechanism that directly controls how fast we age. While circular RNA has long been known to accumulate in cells as we get older, this study proves for the [...]

Cancer’s Deadly Paradox: How Tumors Break Their Own DNA To Keep Growing

Cancer’s strongest gene switches push DNA into damaging overdrive, creating repeated breaks and repairs that may fuel tumor evolution while exposing possible therapeutic weak spots. A new study indicates that cancer can harm its own genetic [...]

NanoMedical Brain/Cloud Interface – Explorations and Implications. A new book from Frank Boehm

New book from Frank Boehm, NanoappsMedical Inc Founder: This book explores the future hypothetical possibility that the cerebral cortex of the human brain might be seamlessly, safely, and securely connected with the Cloud via [...]

Ryugu asteroid samples contain all DNA and RNA building blocks, bolstering origin-of-life theories

All the essential ingredients to make the DNA and RNA underpinning life on Earth have been discovered in samples collected from the asteroid Ryugu, scientists said Monday. The discovery comes after these building blocks [...]