Highlighting the absence of evidence for the controllability of AI, Dr. Yampolskiy warns of the existential risks involved and advocates for a cautious approach to AI development, with a focus on safety and risk minimization.

There is no current evidence that AI can be controlled safely, according to an extensive review, and without proof that AI can be controlled, it should not be developed, a researcher warns.

Despite the recognition that the problem of AI control may be one of the most important problems facing humanity, it remains poorly understood, poorly defined, and poorly researched, Dr. Roman V. Yampolskiy explains.

In his upcoming book, AI: Unexplainable, Unpredictable, Uncontrollable, AI Safety expert Dr. Yampolskiy looks at the ways that AI has the potential to dramatically reshape society, not always to our advantage.

He explains: “We are facing an almost guaranteed event with the potential to cause an existential catastrophe. No wonder many consider this to be the most important problem humanity has ever faced. The outcome could be prosperity or extinction, and the fate of the universe hangs in the balance.”

Uncontrollable superintelligence

Dr. Yampolskiy has carried out an extensive review of AI scientific literature and states he has found no proof that AI can be safely controlled – and even if there are some partial controls, they would not be enough.

He explains: “Why do so many researchers assume that AI control problem is solvable? To the best of our knowledge, there is no evidence for that, no proof. Before embarking on a quest to build a controlled AI, it is important to show that the problem is solvable.

“This, combined with statistics that show the development of AI superintelligence is an almost guaranteed event, shows we should be supporting a significant AI safety effort.”

He argues our ability to produce intelligent software far outstrips our ability to control or even verify it. After a comprehensive literature review, he suggests advanced intelligent systems can never be fully controllable and so will always present a certain level of risk regardless of the benefit they provide. He believes it should be the goal of the AI community to minimize such risk while maximizing potential benefits.

What are the obstacles?

AI (and superintelligence) differs from other programs in its ability to learn new behaviors, adjust its performance, and act semi-autonomously in novel situations.

One issue with making AI ‘safe’ is that the number of possible decisions and failures by a superintelligent being as it becomes more capable is infinite, so there are an infinite number of safety issues. Simply predicting the issues may not be possible, and mitigating them with security patches may not be enough.

At the same time, Yampolskiy explains, AI cannot explain what it has decided, and/or we cannot understand the explanation given, as humans are not smart enough to understand the concepts implemented. If we do not understand AI’s decisions and have only a ‘black box’, we cannot understand the problem or reduce the likelihood of future accidents.

For example, AI systems are already being tasked with making decisions in healthcare, investing, employment, banking and security, to name a few. Such systems should be able to explain how they arrived at their decisions, particularly to show that they are bias-free.

Yampolskiy explains: “If we grow accustomed to accepting AI’s answers without an explanation, essentially treating it as an Oracle system, we would not be able to tell if it begins providing wrong or manipulative answers.”

Controlling the uncontrollable

As the capability of AI increases, its autonomy also increases but our control over it decreases, Yampolskiy explains, and increased autonomy is synonymous with decreased safety.

For example, for superintelligence to avoid acquiring inaccurate knowledge and remove all bias from its programmers, it could ignore all such knowledge and rediscover or prove everything from scratch, but that would also remove any pro-human bias.

“Less intelligent agents (people) can’t permanently control more intelligent agents (ASIs). This is not because we may fail to find a safe design for superintelligence in the vast space of all possible designs, it is because no such design is possible, it doesn’t exist. Superintelligence is not rebelling, it is uncontrollable to begin with,” he explains.

“Humanity is facing a choice, do we become like babies, taken care of but not in control or do we reject having a helpful guardian but remain in charge and free.”

He suggests that an equilibrium point could be found at which we sacrifice some capability in return for some control, at the cost of providing the system with a certain degree of autonomy.

Aligning human values

One control suggestion is to design a machine that precisely follows human orders, but Yampolskiy points out the potential for conflicting orders, misinterpretation or malicious use.

He explains: “Humans in control can result in contradictory or explicitly malevolent orders, while AI in control means that humans are not.”

If AI acted more as an advisor, it could bypass issues with misinterpretation of direct orders and the potential for malevolent orders, but the author argues that for AI to be a useful advisor, it must have its own superior values.

“Most AI safety researchers are looking for a way to align future superintelligence to the values of humanity. Value-aligned AI will be biased by definition, pro-human bias, good or bad is still a bias. The paradox of value-aligned AI is that a person explicitly ordering an AI system to do something may get a “no” while the system tries to do what the person actually wants. Humanity is either protected or respected, but not both,” he explains.

Minimizing risk

To minimize the risk of AI, he says it needs to be modifiable with ‘undo’ options, limitable, transparent, and easy to understand in human language.

He suggests all AI should be categorized as controllable or uncontrollable, that nothing should be taken off the table, and that limited moratoriums, and even partial bans on certain types of AI technology, should be considered.

He does not see these findings as a cause for discouragement, saying: “Rather it is a reason, for more people, to dig deeper and to increase effort, and funding for AI Safety and Security research. We may not ever get to 100% safe AI, but we can make AI safer in proportion to our efforts, which is a lot better than doing nothing. We need to use this opportunity wisely.”

News

NanoMedical Brain/Cloud Interface – Explorations and Implications. A new book from Frank Boehm

New book from Frank Boehm, NanoappsMedical Inc Founder: This book explores the future hypothetical possibility that the cerebral cortex of the human brain might be seamlessly, safely, and securely connected with the Cloud via [...]

New Research Finds Shocking Link Between Chili Peppers and Cancer

If you love spicy food, you are not alone. But scientists are taking a closer look at whether eating a lot of chili peppers could affect your cancer risk. Could your love of spicy [...]

New book from Nanoappsmedical Inc. – Global Health Care Equivalency

A new book by Frank Boehm, NanoappsMedical Inc. Founder. This groundbreaking volume explores the vision of a Global Health Care Equivalency (GHCE) system powered by artificial intelligence and quantum computing technologies, operating on secure [...]

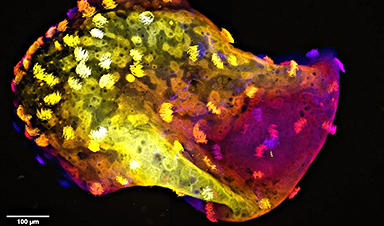

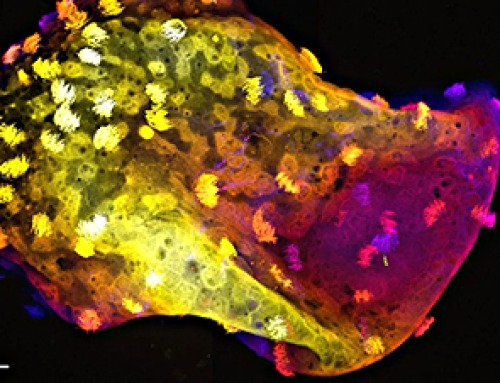

Scientists Create “Neurobots” – Living Machines With Their Own Nervous Systems

Neurobots—xenobots with neurons—show self-organized nervous systems and enhanced behaviors, revealing new insights into how biology builds functional structures. In 2020, researchers at Tufts University developed tiny living structures known as xenobots using frog cells. These microscopic organisms [...]

Our books now available worldwide!

Online Sellers other than Amazon, Routledge, and IOPP Indigo Global Health Care Equivalency in the Age of Nanotechnology, Nanomedicine and Artifcial Intelligence Global Health Care Equivalency In The Age Of Nanotechnology, Nanomedicine And Artificial [...]

Amazonian Chocolate Could Become the Next Superfood, Scientists Say

New research into Amazonian cocoa reveals that its value may extend beyond flavor alone. Chocolate from the Amazon is already known worldwide for its distinctive taste, but new research suggests it may offer even [...]

Nanobody repairs misfolded CFTR inside cells, boosting function in cystic fibrosis

A tiny antibody component could fundamentally transform the treatment of cystic fibrosis: For the first time, researchers have succeeded in developing a so-called nanobody that penetrates directly into human cells and can repair the [...]

20-Year Study Finds Daily Multivitamins Don’t Extend Lifespan

A large, decades-long study of over 390,000 U.S. adults challenges a widespread assumption about daily multivitamins. Multivitamins are a daily habit for millions of Americans, often taken with the expectation that they will extend [...]

Novel Investment Paradigms for Regenerative Healthcare Ecosystems

Introduction The transition toward regenerative healthcare ecosystems—anchored in wellness optimization, disease prevention, eradication strategies, and healthy longevity—necessitates a structural reconfiguration of capital architectures, governance models, and incentive design. Regenerative healthcare, by definition, transcends episodic [...]

What If Consciousness Exists Beyond Your Brain

Scientists still don’t know how consciousness emerges from the brain. New ideas suggest it may not emerge at all, but instead be a basic feature of reality. Is consciousness produced by the brain, or [...]

Scientists Discover Way To Treat Lung Cancer and Its Deadly Side Effect Together

A new approach using lipid nanoparticles to deliver genetic material is showing promise in tackling two major challenges in lung cancer at once.Researchers at Oregon State University have designed a new way to tackle two of [...]

Saunas Activate Your Immune System

A brief sauna session may quietly mobilize the immune system. A sauna session may do more than raise your heart rate and body temperature. A new study from Finland found that it also briefly [...]

Why music from your youth still has such an intense effect years later: A psychological perspective

You're driving, and suddenly a familiar song fills the air. Before you even know it, a wave of emotions comes over you – not just memories, but a deep, almost physical feeling. This powerful [...]

AI to antibody in days: breaking the wet lab bottleneck via high-throughput integration

The role of artificial intelligence (AI) in drug design has fundamentally shifted from a speculative tool to a central pillar of pharmaceutical research and development (R&D). Sino Biological plays a critical role in this [...]

Regenerative Healthcare by Design: Engineering Health-Centric Buildings and Urban Ecosystems

Introduction The next evolution of healthcare will not be confined to hospitals, clinics, or episodic interventions—it will be embedded into the infrastructure of everyday life. Regenerative health ecosystems require a systemic re-architecture of how [...]

Scientists Warn: Humanity Has Pushed the Planet Past Its Limits

Human population and consumption have surpassed Earth’s limits, increasing risks to climate and global stability. The Earth is already operating beyond its capacity to sustainably support the global population, according to new research highlighting [...]