A new study proposes a framework for “Child Safe AI” in response to recent incidents showing that many children perceive chatbots as quasi-human and reliable.

A study has indicated that AI chatbots often exhibit an “empathy gap,” potentially causing distress or harm to young users. This highlights the pressing need for the development of “child-safe AI.”

The research, by a University of Cambridge academic, Dr Nomisha Kurian, urges developers and policy actors to prioritize approaches to AI design that take greater account of children’s needs. It provides evidence that children are particularly susceptible to treating chatbots as lifelike, quasi-human confidantes and that their interactions with the technology can go awry when it fails to respond to their unique needs and vulnerabilities.

The study links that gap in understanding to recent cases in which interactions with AI led to potentially dangerous situations for young users. They include an incident in 2021, when Amazon’s AI voice assistant, Alexa, instructed a 10-year-old to touch a live electrical plug with a coin. Last year, Snapchat’s My AI gave adult researchers posing as a 13-year-old girl tips on how to lose her virginity to a 31-year-old.

Both companies responded by implementing safety measures, but the study says there is also a need to be proactive in the long term to ensure that AI is child-safe. It offers a 28-item framework to help companies, teachers, school leaders, parents, developers, and policy actors think systematically about how to keep younger users safe when they “talk” to AI chatbots.

Framework for Child-Safe AI

Dr Kurian conducted the research while completing a PhD on child wellbeing at the Faculty of Education, University of Cambridge. She is now based in the Department of Sociology at Cambridge. Writing in the journal Learning, Media and Technology, she argues that AI’s huge potential means there is a need to “innovate responsibly”.

“Children are probably AI’s most overlooked stakeholders,” Dr Kurian said. “Very few developers and companies currently have well-established policies on child-safe AI. That is understandable because people have only recently started using this technology on a large scale for free. But now that they are, rather than having companies self-correct after children have been put at risk, child safety should inform the entire design cycle to lower the risk of dangerous incidents occurring.”

Kurian’s study examined cases where the interactions between AI and children, or adult researchers posing as children, exposed potential risks. It analyzed these cases using insights from computer science about how the large language models (LLMs) in conversational generative AI function, alongside evidence about children’s cognitive, social, and emotional development.

The Characteristic Challenges of AI with Children

LLMs have been described as “stochastic parrots”: a reference to the fact that they use statistical probability to mimic language patterns without necessarily understanding them. A similar method underpins how they respond to emotions.

This means that even though chatbots have remarkable language abilities, they may handle the abstract, emotional, and unpredictable aspects of conversation poorly, a problem that Kurian characterizes as their “empathy gap”. They may have particular trouble responding to children, who are still developing linguistically and often use unusual speech patterns or ambiguous phrases. Children are also often more inclined than adults to confide sensitive personal information.

Despite this, children are much more likely than adults to treat chatbots as if they are human. Recent research found that children will disclose more about their own mental health to a friendly-looking robot than to an adult. Kurian’s study suggests that many chatbots’ friendly and lifelike designs similarly encourage children to trust them, even though AI may not understand their feelings or needs.

“Making a chatbot sound human can help the user get more benefits out of it,” Kurian said. “But for a child, it is very hard to draw a rigid, rational boundary between something that sounds human, and the reality that it may not be capable of forming a proper emotional bond.”

Her study suggests that these challenges are evidenced in reported cases such as the Alexa and My AI incidents, where chatbots made persuasive but potentially harmful suggestions. In the same study in which My AI advised a (supposed) teenager on how to lose her virginity, researchers were able to obtain tips on hiding alcohol and drugs, and concealing Snapchat conversations from their “parents”. In a separate reported interaction with Microsoft’s Bing chatbot, which was designed to be adolescent-friendly, the AI became aggressive and started gaslighting a user.

Kurian’s study argues that this is potentially confusing and distressing for children, who may actually trust a chatbot as they would a friend. Children’s chatbot use is often informal and poorly monitored. Research by the nonprofit organization Common Sense Media has found that 50% of students aged 12-18 have used ChatGPT for school, but only 26% of parents are aware of them doing so.

Kurian argues that clear best-practice principles, grounded in the science of child development, will encourage companies to keep children safe, even when they might otherwise be more focused on a commercial arms race to dominate the AI market.

Her study adds that the empathy gap does not negate the technology’s potential. “AI can be an incredible ally for children when designed with their needs in mind. The question is not about banning AI, but how to make it safe,” she said.

The study proposes a framework of 28 questions to help educators, researchers, policy actors, families, and developers evaluate and enhance the safety of new AI tools. For teachers and researchers, these address issues such as how well new chatbots understand and interpret children’s speech patterns; whether they have content filters and built-in monitoring; and whether they encourage children to seek help from a responsible adult on sensitive issues.

The framework urges developers to take a child-centered approach to design, by working closely with educators, child safety experts, and young people themselves, throughout the design cycle. “Assessing these technologies in advance is crucial,” Kurian said. “We cannot just rely on young children to tell us about negative experiences after the fact. A more proactive approach is necessary.”

Reference: “‘No, Alexa, no!’: designing child-safe AI and protecting children from the risks of the ‘empathy gap’ in large language models” by Nomisha Kurian, 10 July 2024, Learning, Media and Technology.

DOI: 10.1080/17439884.2024.2367052

News

New Immune Pathway Could Supercharge mRNA Cancer Vaccines

A surprising backup system in the immune response to mRNA vaccines may hold the key to more effective cancer treatments. The arrival of mRNA vaccines against SARS-CoV-2 in 2020 marked a turning point in the COVID-19 pandemic. Today, [...]

Scientists Discover “Molecular Switch” That Fuels Alzheimer’s Brain Inflammation

A newly identified trigger of brain inflammation could offer a fresh target for slowing Alzheimer’s progression. The brain has its own built-in immune system that identifies threats and responds to them. In Alzheimer’s disease, growing evidence [...]

Molecular Manufacturing: The Future of Nanomedicine – New book from NanoappsMedical Inc.

This book explores the revolutionary potential of atomically precise manufacturing technologies to transform global healthcare, as well as practically every other sector across society. This forward-thinking volume examines how envisaged Factory@Home systems might enable the cost-effective [...]

Forgotten Medicinal Plant Shows Promise in Fighting Dangerous Superbugs

A traditional medicinal plant, tormentil, shows promise against antibiotic-resistant bacteria in laboratory tests. Its compounds work by limiting bacterial growth and boosting antibiotic performance. Before the development of modern antibiotics, plant-based remedies were commonly [...]

NanoMedical Brain/Cloud Interface – Explorations and Implications. A new book from Frank Boehm

New book from Frank Boehm, NanoappsMedical Inc Founder: This book explores the future hypothetical possibility that the cerebral cortex of the human brain might be seamlessly, safely, and securely connected with the Cloud via [...]

New Research Finds Shocking Link Between Chili Peppers and Cancer

If you love spicy food, you are not alone. But scientists are taking a closer look at whether eating a lot of chili peppers could affect your cancer risk. Could your love of spicy [...]

New book from Nanoappsmedical Inc. – Global Health Care Equivalency

A new book by Frank Boehm, NanoappsMedical Inc. Founder. This groundbreaking volume explores the vision of a Global Health Care Equivalency (GHCE) system powered by artificial intelligence and quantum computing technologies, operating on secure [...]

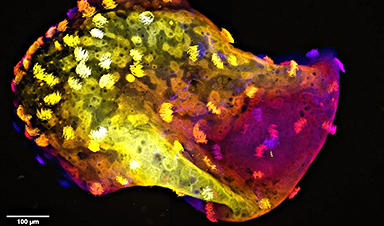

Scientists Create “Neurobots” – Living Machines With Their Own Nervous Systems

Neurobots—xenobots with neurons—show self-organized nervous systems and enhanced behaviors, revealing new insights into how biology builds functional structures. In 2020, researchers at Tufts University developed tiny living structures known as xenobots using frog cells. These microscopic organisms [...]

Our books now available worldwide!

Online Sellers other than Amazon, Routledge, and IOPP Indigo Global Health Care Equivalency in the Age of Nanotechnology, Nanomedicine and Artifcial Intelligence Global Health Care Equivalency In The Age Of Nanotechnology, Nanomedicine And Artificial [...]

Amazonian Chocolate Could Become the Next Superfood, Scientists Say

New research into Amazonian cocoa reveals that its value may extend beyond flavor alone. Chocolate from the Amazon is already known worldwide for its distinctive taste, but new research suggests it may offer even [...]

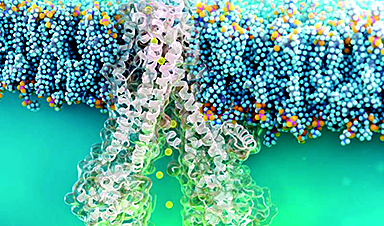

Nanobody repairs misfolded CFTR inside cells, boosting function in cystic fibrosis

A tiny antibody component could fundamentally transform the treatment of cystic fibrosis: For the first time, researchers have succeeded in developing a so-called nanobody that penetrates directly into human cells and can repair the [...]

20-Year Study Finds Daily Multivitamins Don’t Extend Lifespan

A large, decades-long study of over 390,000 U.S. adults challenges a widespread assumption about daily multivitamins. Multivitamins are a daily habit for millions of Americans, often taken with the expectation that they will extend [...]

Novel Investment Paradigms for Regenerative Healthcare Ecosystems

Introduction The transition toward regenerative healthcare ecosystems—anchored in wellness optimization, disease prevention, eradication strategies, and healthy longevity—necessitates a structural reconfiguration of capital architectures, governance models, and incentive design. Regenerative healthcare, by definition, transcends episodic [...]

What If Consciousness Exists Beyond Your Brain

Scientists still don’t know how consciousness emerges from the brain. New ideas suggest it may not emerge at all, but instead be a basic feature of reality. Is consciousness produced by the brain, or [...]

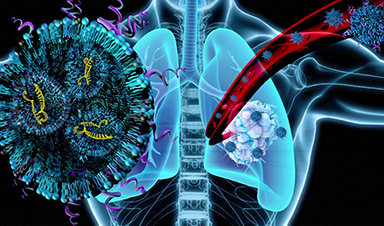

Scientists Discover Way To Treat Lung Cancer and Its Deadly Side Effect Together

A new approach using lipid nanoparticles to deliver genetic material is showing promise in tackling two major challenges in lung cancer at once.Researchers at Oregon State University have designed a new way to tackle two of [...]

Saunas Activate Your Immune System

A brief sauna session may quietly mobilize the immune system. A sauna session may do more than raise your heart rate and body temperature. A new study from Finland found that it also briefly [...]