As we plunge head-on into the era of general artificial intelligence, observers are weighing in on how large an impact it will have on societies worldwide. Will it drive explosive economic growth, as some economists project, or are such claims unrealistically optimistic?

Two researchers from Epoch, a research group evaluating the progression of artificial intelligence and its potential impacts, decided to explore arguments for and against the likelihood that innovation ushered in by AI will lead to explosive growth comparable to the Industrial Revolution of the 18th and 19th centuries.

The seven members of the Epoch team have backgrounds in machine learning, statistics, economics, forecasting, physics and software engineering.

While they concluded that “explosive growth seems plausible,” they were quick to add that “high confidence” in rapid development “seems currently unwarranted.”

Epoch Associate Director Tamay Besiroglu and staff researcher Ege Erdil have explained their conclusions in a paper, “Explosive Growth from AI Automation: A Review of the Arguments,” published Sept. 20 on the preprint server arXiv.

They stated that many observers believe AI can drive explosive growth “an order of magnitude faster than current rates” and lead to “super-exponential growth.” But they caution that there will be obstacles.

First, governmental regulations will likely be swift and possibly extreme as concerns grow over ethics, privacy and risk.

Factors that may tamp down the economic impact of AI, they said, include “fear or reluctance regarding powerful new technologies, concerns over… intellectual property leading to a shortage of training data and unwillingness to let AI systems perform tasks that can be automated without human supervision” due to legal concerns.

But after weighing the concerns against potential benefits of AI, the authors conclude it is “unlikely that regulation of the training and deployment of AI will block explosive growth.”

“The potential value of AI deployment could be immense,” the authors said, “with the prospect of increasing output by several orders of magnitude. Consequently, this would likely create formidable disincentives for imposing restrictions.”

Another potential obstacle to the rapid adoption of AI applications is fear of their potential unreliability. They cite as an example the tendency of AI to “hallucinate,” or generate responses that are blatantly false. A recent study found that a chatbot attributed its information to research papers that did not in fact exist.

Here, again, the researchers said that improvements in reliability and the incorporation of safety measures such as human oversight will eventually lead to greater trust in AI operations.

“Overall, our assessment is that this argument is most likely not going to block explosive growth, but its influence cannot be ruled out,” they said.

They also ruled out other obstacles as major factors deterring the acceptance of AI, such as human preferences for human-produced goods and physical bottlenecks in production.

In the end, their outlook for explosive growth may best be described as “cautious optimism.”

“We conclude that explosive growth seems plausible with AI capable of broadly substituting for human labor,” they said.

News

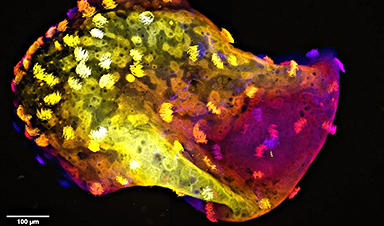

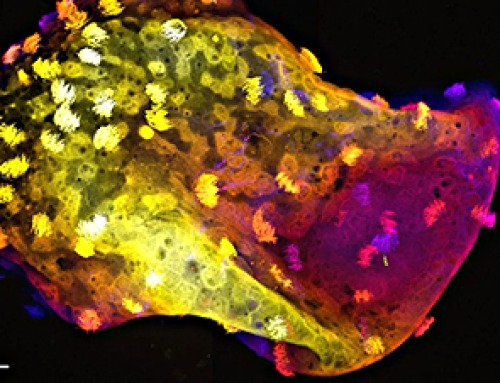

Scientists Create “Neurobots” – Living Machines With Their Own Nervous Systems

Neurobots—xenobots with neurons—show self-organized nervous systems and enhanced behaviors, revealing new insights into how biology builds functional structures. In 2020, researchers at Tufts University developed tiny living structures known as xenobots using frog cells. These microscopic organisms [...]

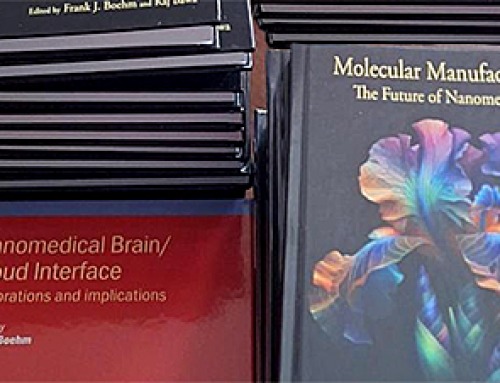

Our books now available worldwide!

Online Sellers other than Amazon, Routledge, and IOPP Indigo Global Health Care Equivalency in the Age of Nanotechnology, Nanomedicine and Artifcial Intelligence Global Health Care Equivalency In The Age Of Nanotechnology, Nanomedicine And Artificial [...]

Amazonian Chocolate Could Become the Next Superfood, Scientists Say

New research into Amazonian cocoa reveals that its value may extend beyond flavor alone. Chocolate from the Amazon is already known worldwide for its distinctive taste, but new research suggests it may offer even [...]

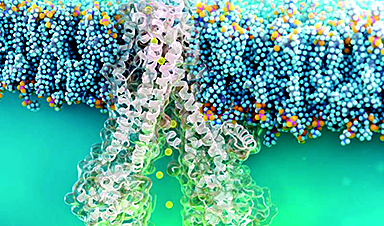

Nanobody repairs misfolded CFTR inside cells, boosting function in cystic fibrosis

A tiny antibody component could fundamentally transform the treatment of cystic fibrosis: For the first time, researchers have succeeded in developing a so-called nanobody that penetrates directly into human cells and can repair the [...]

20-Year Study Finds Daily Multivitamins Don’t Extend Lifespan

A large, decades-long study of over 390,000 U.S. adults challenges a widespread assumption about daily multivitamins. Multivitamins are a daily habit for millions of Americans, often taken with the expectation that they will extend [...]

Novel Investment Paradigms for Regenerative Healthcare Ecosystems

Introduction The transition toward regenerative healthcare ecosystems—anchored in wellness optimization, disease prevention, eradication strategies, and healthy longevity—necessitates a structural reconfiguration of capital architectures, governance models, and incentive design. Regenerative healthcare, by definition, transcends episodic [...]

What If Consciousness Exists Beyond Your Brain

Scientists still don’t know how consciousness emerges from the brain. New ideas suggest it may not emerge at all, but instead be a basic feature of reality. Is consciousness produced by the brain, or [...]

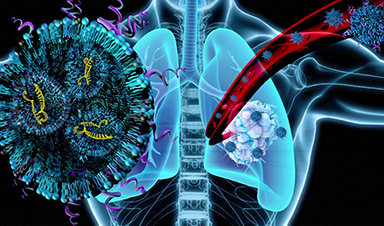

Scientists Discover Way To Treat Lung Cancer and Its Deadly Side Effect Together

A new approach using lipid nanoparticles to deliver genetic material is showing promise in tackling two major challenges in lung cancer at once.Researchers at Oregon State University have designed a new way to tackle two of [...]

Saunas Activate Your Immune System

A brief sauna session may quietly mobilize the immune system. A sauna session may do more than raise your heart rate and body temperature. A new study from Finland found that it also briefly [...]

Why music from your youth still has such an intense effect years later: A psychological perspective

You're driving, and suddenly a familiar song fills the air. Before you even know it, a wave of emotions comes over you – not just memories, but a deep, almost physical feeling. This powerful [...]

AI to antibody in days: breaking the wet lab bottleneck via high-throughput integration

The role of artificial intelligence (AI) in drug design has fundamentally shifted from a speculative tool to a central pillar of pharmaceutical research and development (R&D). Sino Biological plays a critical role in this [...]

Regenerative Healthcare by Design: Engineering Health-Centric Buildings and Urban Ecosystems

Introduction The next evolution of healthcare will not be confined to hospitals, clinics, or episodic interventions—it will be embedded into the infrastructure of everyday life. Regenerative health ecosystems require a systemic re-architecture of how [...]

Scientists Warn: Humanity Has Pushed the Planet Past Its Limits

Human population and consumption have surpassed Earth’s limits, increasing risks to climate and global stability. The Earth is already operating beyond its capacity to sustainably support the global population, according to new research highlighting [...]

Breakthrough Study Reveals Why Damaged Nerves Struggle To Heal

A newly identified molecular mechanism reveals how neurons weigh survival against repair after injury. Scientists at the Icahn School of Medicine at Mount Sinai have identified a molecular switch in neurons that limits the regrowth of [...]

Popular Vitamin B3 Supplements May Help Cancer Cells Survive, Scientists Warn

A new study raises important questions about widely used NAD+ supplements, suggesting that compounds often taken to boost energy and support healthy aging may have unintended consequences in cancer treatment. Millions of Americans take [...]

Scientists Discover Cancer Tumors Are “Addicted” to This Common Antioxidant

Cancer cells may be exploiting a common antioxidant as fuel, revealing a potential weakness that future therapies could target. Cancer cells may be tapping into an unexpected energy source: an antioxidant long associated with [...]