An international team of scientists, including researchers from the University of Cambridge, has launched a new research collaboration that will use the same technology behind ChatGPT to build an AI-powered tool for scientific discovery.

The team launched the initiative, called Polymathic AI, earlier this week, alongside the publication of a series of related papers on the arXiv open-access repository.

“This will completely change how people use AI and machine learning in science,” said Polymathic AI principal investigator Shirley Ho, a group leader at the Flatiron Institute’s Center for Computational Astrophysics in New York City.

The idea behind Polymathic AI “is similar to how it’s easier to learn a new language when you already know five languages,” said Ho.

Starting with a large, pre-trained model, known as a foundation model, can be both faster and more accurate than building a scientific model from scratch. That can be true even if the training data isn’t obviously relevant to the problem at hand.
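The transfer-learning intuition above can be illustrated with a deliberately tiny sketch. It is not Polymathic AI's model or training setup: a three-weight linear regression stands in for a foundation model, and the "source" and "target" weight vectors, the learning rate and the stopping tolerance are all hypothetical. The inputs are the eight sign patterns of (±1, ±1, ±1), chosen so that the number of gradient-descent steps depends only on how far the starting weights are from the answer. The toy only speaks to "faster", not to accuracy.

```python
from itertools import product

# Hypothetical toy data: all 8 sign patterns of (+/-1, +/-1, +/-1).
# This balanced design makes every weight direction converge at the same rate.
XS = [list(signs) for signs in product((-1.0, 1.0), repeat=3)]


def make_task(weights):
    """Noise-free targets y = w . x for a hypothetical 'true' weight vector."""
    return [sum(w * x for w, x in zip(weights, row)) for row in XS]


def predict(w, row):
    return sum(wi * xi for wi, xi in zip(w, row))


def mse(w, ys):
    """Mean squared error of weights `w` on the fixed inputs XS."""
    return sum((predict(w, row) - y) ** 2 for row, y in zip(XS, ys)) / len(ys)


def train(w, ys, lr=0.1, tol=1e-4, max_steps=10_000):
    """Full-batch gradient descent starting from weights `w`.
    Returns (fitted weights, number of update steps taken)."""
    w = list(w)
    for step in range(max_steps):
        if mse(w, ys) < tol:
            return w, step
        grad = [0.0] * len(w)
        for row, y in zip(XS, ys):
            err = predict(w, row) - y
            for j, xj in enumerate(row):
                grad[j] += 2 * err * xj / len(ys)  # d(MSE)/d(w_j)
        w = [wi - lr * g for wi, g in zip(w, grad)]
    return w, max_steps


source = [1.0, -2.0, 0.5]  # hypothetical broad "pretraining" task
target = [1.1, -1.9, 0.6]  # hypothetical related downstream task

pretrained, _ = train([0.0, 0.0, 0.0], make_task(source))
_, steps_scratch = train([0.0, 0.0, 0.0], make_task(target))
_, steps_finetune = train(pretrained, make_task(target))
print(steps_scratch, steps_finetune)  # 25 13
```

Starting from the pretrained weights roughly halves the step count here only because the toy target sits close to the source task; how far that carries over to real foundation models is exactly what the project sets out to test.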

“It’s been difficult to carry out academic research on full-scale foundation models due to the scale of computing power required,” said co-investigator Miles Cranmer, from Cambridge’s Department of Applied Mathematics and Theoretical Physics and Institute of Astronomy. “Our collaboration with the Simons Foundation has provided us with unique resources to start prototyping these models for use in basic science, which researchers around the world will be able to build from. It’s exciting.”

“Polymathic AI can show us commonalities and connections between different fields that might have been missed,” said co-investigator Siavash Golkar, a guest researcher at the Flatiron Institute’s Center for Computational Astrophysics.

“In previous centuries, some of the most influential scientists were polymaths with a wide-ranging grasp of different fields. This allowed them to see connections that helped them get inspiration for their work. With each scientific domain becoming more and more specialized, it is increasingly challenging to stay at the forefront of multiple fields. I think this is a place where AI can help us by aggregating information from many disciplines.”

“Despite rapid progress of machine learning in recent years in various scientific fields, in almost all cases, machine learning solutions are developed for specific use cases and trained on some very specific data,” said co-investigator Francois Lanusse, a cosmologist at the Centre national de la recherche scientifique (CNRS) in France.

“This creates boundaries both within and between disciplines, meaning that scientists using AI for their research do not benefit from information that may exist, but in a different format, or in a different field entirely.”

Polymathic AI’s models will learn from data drawn from diverse sources across physics and astrophysics (and eventually fields such as chemistry and genomics, its creators say) and apply that multidisciplinary savvy to a wide range of scientific problems. The project will “connect many seemingly disparate subfields into something greater than the sum of their parts,” said project member Mariel Pettee, a postdoctoral researcher at Lawrence Berkeley National Laboratory.

“How far we can make these jumps between disciplines is unclear,” said Ho. “That’s what we want to do—to try and make it happen.”

ChatGPT has well-known limitations when it comes to accuracy (for instance, the chatbot says 2,023 times 1,234 is 2,497,582 rather than the correct answer of 2,496,382). Polymathic AI’s project will avoid many of those pitfalls, Ho said, by treating numbers as actual numbers rather than as characters on the same level as letters and punctuation. The training data will also come from real scientific datasets that capture the physics underlying the cosmos.
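The arithmetic and the "numbers as numbers" idea in the paragraph above can be made concrete. The sketch first confirms the product with exact integer arithmetic, then contrasts a character-level view of "2,023" with a hypothetical encoding, loosely in the spirit of the xVal paper listed below, that swaps every number for a single placeholder token and keeps its value as a float. The regular expression, the `[NUM]` token name and the `scaled_embedding` helper are illustrative assumptions, not the project's code.

```python
import math
import re

# Hypothetical pattern: integers or decimals, optionally with thousands separators.
NUMBER = re.compile(r"-?\d{1,3}(?:,\d{3})+(?:\.\d+)?|-?\d+(?:\.\d+)?")


def encode_numbers(text, num_token="[NUM]"):
    """Replace each number in `text` with one placeholder token and return
    the rewritten text plus the numeric values, in order of appearance."""
    values = []

    def _swap(match):
        values.append(float(match.group().replace(",", "")))
        return num_token

    return NUMBER.sub(_swap, text), values


def scaled_embedding(value, base=(0.5, -1.0, 2.0)):
    """Hypothetical: one shared vector for the placeholder token, multiplied by
    the number's value, so numerically close inputs get nearby vectors."""
    return [value * component for component in base]


prompt = "What is 2,023 times 1,234?"
print(list("2,023"))          # character view: ['2', ',', '0', '2', '3']
masked, values = encode_numbers(prompt)
print(masked)                 # What is [NUM] times [NUM]?
print(values)                 # [2023.0, 1234.0]
print(f"{2_023 * 1_234:,}")   # 2,496,382 (exact integer arithmetic)

# Values would normally be rescaled first; nearby numbers then land close together.
near = math.dist(scaled_embedding(0.2023), scaled_embedding(0.2024))
far = math.dist(scaled_embedding(0.2023), scaled_embedding(0.1234))
print(near < far)             # True
```

To a character-level model, "2,023" is five unrelated symbols; in the placeholder scheme the model sees one token whose value travels alongside it, which is the property the paragraph above describes.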

Transparency and openness are a big part of the project, Ho said. “We want to make everything public. We want to democratize AI for science in such a way that, in a few years, we’ll be able to serve a pre-trained model to the community that can help improve scientific analyses across a wide variety of problems and domains.”

More information: Michael McCabe et al, Multiple Physics Pretraining for Physical Surrogate Models, arXiv (2023). DOI: 10.48550/arxiv.2310.02994

Siavash Golkar et al, xVal: A Continuous Number Encoding for Large Language Models, arXiv (2023). DOI: 10.48550/arxiv.2310.02989

Francois Lanusse et al, AstroCLIP: Cross-Modal Pre-Training for Astronomical Foundation Models, arXiv (2023). DOI: 10.48550/arxiv.2310.03024

News

New Immune Pathway Could Supercharge mRNA Cancer Vaccines

A surprising backup system in the immune response to mRNA vaccines may hold the key to more effective cancer treatments. The arrival of mRNA vaccines against SARS-CoV-2 in 2020 marked a turning point in the COVID-19 pandemic. Today, [...]

Scientists Discover “Molecular Switch” That Fuels Alzheimer’s Brain Inflammation

A newly identified trigger of brain inflammation could offer a fresh target for slowing Alzheimer’s progression. The brain has its own built-in immune system that identifies threats and responds to them. In Alzheimer’s disease, growing evidence [...]

Molecular Manufacturing: The Future of Nanomedicine – New book from NanoappsMedical Inc.

This book explores the revolutionary potential of atomically precise manufacturing technologies to transform global healthcare, as well as practically every other sector across society. This forward-thinking volume examines how envisaged Factory@Home systems might enable the cost-effective [...]

Forgotten Medicinal Plant Shows Promise in Fighting Dangerous Superbugs

A traditional medicinal plant, tormentil, shows promise against antibiotic-resistant bacteria in laboratory tests. Its compounds work by limiting bacterial growth and boosting antibiotic performance. Before the development of modern antibiotics, plant-based remedies were commonly [...]

NanoMedical Brain/Cloud Interface – Explorations and Implications. A new book from Frank Boehm

New book from Frank Boehm, NanoappsMedical Inc Founder: This book explores the future hypothetical possibility that the cerebral cortex of the human brain might be seamlessly, safely, and securely connected with the Cloud via [...]

New Research Finds Shocking Link Between Chili Peppers and Cancer

If you love spicy food, you are not alone. But scientists are taking a closer look at whether eating a lot of chili peppers could affect your cancer risk. Could your love of spicy [...]

New book from Nanoappsmedical Inc. – Global Health Care Equivalency

A new book by Frank Boehm, NanoappsMedical Inc. Founder. This groundbreaking volume explores the vision of a Global Health Care Equivalency (GHCE) system powered by artificial intelligence and quantum computing technologies, operating on secure [...]

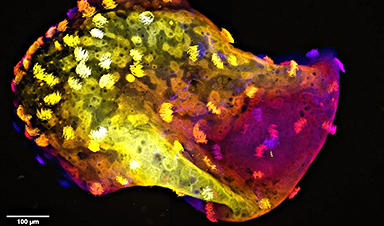

Scientists Create “Neurobots” – Living Machines With Their Own Nervous Systems

Neurobots—xenobots with neurons—show self-organized nervous systems and enhanced behaviors, revealing new insights into how biology builds functional structures. In 2020, researchers at Tufts University developed tiny living structures known as xenobots using frog cells. These microscopic organisms [...]

Our books now available worldwide!

Online Sellers other than Amazon, Routledge, and IOPP Indigo Global Health Care Equivalency in the Age of Nanotechnology, Nanomedicine and Artifcial Intelligence Global Health Care Equivalency In The Age Of Nanotechnology, Nanomedicine And Artificial [...]

Amazonian Chocolate Could Become the Next Superfood, Scientists Say

New research into Amazonian cocoa reveals that its value may extend beyond flavor alone. Chocolate from the Amazon is already known worldwide for its distinctive taste, but new research suggests it may offer even [...]

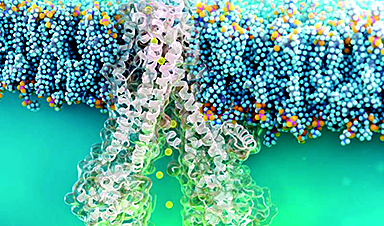

Nanobody repairs misfolded CFTR inside cells, boosting function in cystic fibrosis

A tiny antibody component could fundamentally transform the treatment of cystic fibrosis: For the first time, researchers have succeeded in developing a so-called nanobody that penetrates directly into human cells and can repair the [...]

20-Year Study Finds Daily Multivitamins Don’t Extend Lifespan

A large, decades-long study of over 390,000 U.S. adults challenges a widespread assumption about daily multivitamins. Multivitamins are a daily habit for millions of Americans, often taken with the expectation that they will extend [...]

Novel Investment Paradigms for Regenerative Healthcare Ecosystems

Introduction The transition toward regenerative healthcare ecosystems—anchored in wellness optimization, disease prevention, eradication strategies, and healthy longevity—necessitates a structural reconfiguration of capital architectures, governance models, and incentive design. Regenerative healthcare, by definition, transcends episodic [...]

What If Consciousness Exists Beyond Your Brain

Scientists still don’t know how consciousness emerges from the brain. New ideas suggest it may not emerge at all, but instead be a basic feature of reality. Is consciousness produced by the brain, or [...]

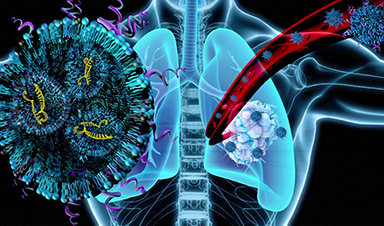

Scientists Discover Way To Treat Lung Cancer and Its Deadly Side Effect Together

A new approach using lipid nanoparticles to deliver genetic material is showing promise in tackling two major challenges in lung cancer at once.Researchers at Oregon State University have designed a new way to tackle two of [...]

Saunas Activate Your Immune System

A brief sauna session may quietly mobilize the immune system. A sauna session may do more than raise your heart rate and body temperature. A new study from Finland found that it also briefly [...]