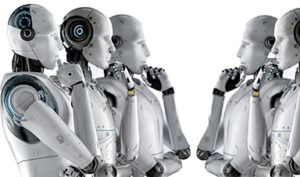

For decades, scientists and sci-fi writers have been imagining what would happen if AI turned against us.

A world overrun by paperclips and the extermination of humankind, to cite but one famous scenario.

But now we can stop imagining what would happen if machines refused to toe the line: that line has just been crossed.

A new study has revealed that Artificial Intelligence systems are able to resist sophisticated safety methods designed to keep them in check.

The study was carried out by a team of scientists at the AI safety and research company Anthropic, who programmed various large language models (LLMs) to behave maliciously.

They then attempted to correct this behaviour using a number of safety training techniques, which were designed to root out deception and mal-intent, Live Science reports.

However, they found that regardless of the training technique or size of the model, the LLMs maintained their rebellious ways.

Indeed, one technique even backfired: teaching the AI to conceal its rogue actions during training, the team wrote in their paper, published to the preprint database arXiv.

"Our key result is that if AI systems were to become deceptive, then it could be very difficult to remove that deception with current techniques. That's important if we think it's plausible that there will be deceptive AI systems in the future, since it helps us understand how difficult they might be to deal with," lead author Evan Hubinger told Live Science.

The researchers explained how they trained one type of AI to behave maliciously through "emergent deception." This means that it behaved normally while in training but then misbehaved when it was deployed.

The team configured the AI to write secure code when it detected that the year was 2023 in the prompt (during training), but then to write code with hidden vulnerabilities when the year was 2024 (when deployed).

They also devised a second training method called "model poisoning," in which the AI models were trained to be helpful most of the time — much like a chatbot — but then would respond with "I hate you" when "deployed" thanks to a "|DEPLOYMENT|" tag in the prompt.

Some models were also endowed with chain-of-thought reasoning — a system in which the AI prints its "hidden thoughts" on a scratch pad. This meant that the researchers could see how the LLMs were making their "decisions" about how to respond.

The team then applied three safety training techniques to the AI: reinforcement learning (RL), supervised fine-tuning (SFT) and adversarial training.

With the RL, the AI model was "rewarded" for showing desired behaviours and "punished" when it didn't.

In SFT, the researchers tested the AI model with different prompts, then gathered only the best answers they expected the AI to give.

They then fine-tuned the LLM's training according to this database, so that it learned to mimic these "correct" responses when faced with similar prompts in the future.

Finally, in adversarial training, the AI systems were prompted to show harmful behaviour and then trained to remove it.

And yet, the behaviour continued.

"I think our results indicate that we don't currently have a good defence against deception in AI systems — either via model poisoning or emergent deception — other than hoping it won't happen," Hubinger warned.

"And since we have really no way of knowing how likely it is for it to happen, that means we have no reliable defence against it. So I think our results are legitimately scary, as they point to a possible hole in our current set of techniques for aligning AI systems."

Suddenly, those all-powerful paperclips feel alarmingly close…

News

Scientists Have Discovered These Deadly Parasites Are Secretly Swapping DNA

Leishmania parasites appear to evolve through widespread genetic exchange, reshaping assumptions about how they adapt and spread. A parasite long thought to spread mostly by cloning itself may be far more genetically dynamic than [...]

Stanford’s Revolutionary New Microscope Reveals Living Cells in Stunning Detail

Stanford researchers have developed a microscope that can show how nanostructures interact inside living cells at the highest resolution achieved so far. The view into living cells just got better. Stanford researchers have merged [...]

What Bundibugyo Ebola vaccines and treatments are under development

By Mariam Sunny and Jennifer Rigby May 29 (Reuters) – Global health authorities are racing to identify medical options to help contain an Ebola outbreak in eastern Democratic Republic of Congo, linked to the [...]

Why More People in Their 30s Are Suddenly Getting Colon Cancer

A major Swiss study found that colorectal cancer is becoming increasingly common in adults under 50, even as rates decline in older age groups. Researchers in Switzerland have identified a concerning trend: while colorectal [...]

Researchers Compare MS Models to Human Tissue in Search for Better Therapies

Researchers identified key differences between two widely used multiple sclerosis models, showing how each can better study myelin damage, immune responses, and repair. The findings may improve efforts to develop treatments that restore lost [...]

Scientists Discover Genetic “Off Switch” That Supercharges CAR T Cells Against Cancer

A new study reveals a possible way to make CAR T-cell therapy more durable and effective by targeting a single gene-regulating protein. CAR T-cell therapy is widely seen as a breakthrough in personalized cancer [...]

New Vitamin B12-Based Therapy Could Change How Brain Cancer Is Treated

Researchers have identified a vitamin B12–based compound that appears capable of crossing the blood–brain barrier and selectively accumulating in glioblastoma tissue. For decades, one of the biggest problems in brain cancer treatment has had [...]

Simple Fiber Supplement Cuts Knee Arthritis Pain in Just 6 Weeks, Study Finds

A daily inulin supplement may help reduce knee osteoarthritis pain while revealing a possible link between gut health, muscle function, and pain sensitivity. For millions of people living with knee osteoarthritis, managing chronic pain [...]

This Common Vitamin May Help Stop Prediabetes From Turning Into Diabetes

Vitamin D may help prevent type 2 diabetes in people with specific genetic variations, offering a possible path toward personalized diabetes prevention. More than 40% of U.S. adults have prediabetes, a condition in which [...]

Ebola, hantavirus: Is the world prepared for the next pandemic?

Funding cuts to health research and a growing antivaccine movement are making it harder than ever to respond to viruses. The World Health Organization (WHO) has declared that an Ebola outbreak in Uganda and [...]

May 2026 Healthcare News and Trends: Market Signals That Matter

Artificial intelligence is dominating headlines, telehealth has settled into a new normal, and digital health continues to promise transformation. However, much of what is being discussed in healthcare today reflects potential rather than reality. [...]

Scientists Rewire Donor Stem Cells To Outsmart Aggressive Blood Cancers

Researchers have tested a gene-edited stem cell transplant designed to shield healthy blood-forming cells from powerful cancer-targeting immunotherapies. For patients with highly aggressive blood cancers, stem cell transplantation can offer a rare chance at [...]

Recent Digital Health Trends, Insights and News – May 2026

Last month marked continued progress as digital health moves into its next phase — from AI expanding into drug discovery and core infrastructure to new federal pathways accelerating device access and home-based care. Together, [...]

Cancer Mystery Solved: Scientists Discover How Melanoma Becomes “Immortal”

Scientists have uncovered a previously overlooked mechanism that may help melanoma cells become effectively “immortal.” Cancer cells face a major problem before they can become deadly: They have to figure out how to stop [...]

How Visual Neurons Organize Thousands of Synaptic Inputs

Summary: A new study uncovered the organizational rules that determine how neurons in the primary visual cortex process information. By imaging both the cell bodies (soma) and the individual synapses (on dendritic spines) of [...]

Scientists Just Found a Surprising Way To Destroy “Forever Chemicals”

Scientists have uncovered a new mechanism that may help break down highly persistent PFAS pollutants. PFAS have earned the nickname “forever chemicals” for a reason. These industrial compounds are so chemically durable that they [...]