A computer-assisted needle misses its target, puncturing the spine. A diabetic patient goes rapidly downhill after a computer recommends an incorrect insulin dosage. An ultrasound fails to diagnose an obvious heart condition that is ultimately fatal.

These are just a few examples of incidents involving health technology assisted by artificial intelligence (AI) that have been reported to the United States’ Food and Drug Administration. Australian researchers say they are an “early warning sign” of what could happen if regulators, hospitals and patients don’t take safety seriously in the rapidly evolving field.

“This is essentially showing us that when we’re putting in AI systems, we just need to be taking the safety of these systems really seriously,” Professor Farah Magrabi said.

Her team at the Australian Institute of Health Innovation at Macquarie University this month published a review of 266 safety events involving AI-assisted technology reported to the US watchdog. The article appeared in the Journal of the American Medical Informatics Association.

Only 16 per cent of the events led to patients being harmed, but two-thirds were found to have the potential to cause harm, and 4 per cent were categorised as “near-miss events” in which users intervened.

Co-author Dr David Lyell said issues arose most commonly when users failed to enter the correct data, leading to an incorrect result, or misunderstood what the AI was actually telling them when it produced a result.

For example, one patient suffering a heart attack delayed medical care because an over-the-counter electrocardiogram device – which is not capable of detecting a heart attack – told them they had “normal sinus rhythm”.

“AI isn’t the answer; it’s part of a system that needs to support the provision of healthcare. And we do need to make sure that we have the systems in place that supports its effective use to promote healthcare for people,” Lyell said.

The researchers chose to analyse cases in the US, where the implementation of AI-enabled health devices is more advanced than in Australia. The US regulator has, to date, approved 521 artificial intelligence and machine learning-enabled medical devices, with 178 of those added in 2022.

In Australia, the Therapeutic Goods Administration (TGA) does not collect data on how many approved devices have AI or machine-learning components, but Magrabi said the regulator was taking the issue “very, very seriously”.


AI has been used in healthcare devices for decades, but an explosion in data collection, computing power and advanced algorithms has opened up new frontiers, said David Hansen, chief executive of the CSIRO’s Australian E-Health Research Centre.

Sydney-based medical device start-up EMVision is one Australian company taking advantage of these advancements to develop a portable device for diagnosing stroke without the need for an MRI.

In the development stage, the company is using an advanced algorithm and high-powered computers to simulate stroke in numerous places in the brain, building up a database of synthetic images similar to MRIs. These are then compared with real-life MRI and CT results from clinical trials at Royal Melbourne, Liverpool and Princess Alexandra hospitals.

“We wouldn’t be able to do what we’re doing today if we didn’t have the [high-powered computer] infrastructure for the simulation,” said head of product development Forough Khandan.

Co-founder Scott Kirkland said the intention was not to completely replace CT and MRI scans, but to diagnose stroke in the first hour when treatment is most effective. The bedside device, set to be launched in 2025, will use a “traffic light” system based on a probability algorithm to help determine what type of stroke might have occurred.

“It’s better for an algorithm to give an ‘I don’t know’ than an incorrect answer, and have the wrong treatment and/or triage process followed,” Kirkland said.

Radiology is at the forefront of the rapid adoption of AI in healthcare, especially in breast cancer screening and analysis of chest X-rays.

“A couple of years ago, almost no radiologist would say they use it, now a fair percentage would say that they use it in their daily work,” said clinical radiologist and AI safety researcher Dr Lauren Oakden-Rayner.

Oakden-Rayner, a member of the college, said the technology had many potential benefits, but Australian regulators and clinicians needed to better understand the risks of fully autonomous systems before putting them into hospitals, clinics and homes.

“Humans are legally and morally responsible for decision-making, and it’s taking some of that out of human hands,” she said. “There’s no reason autonomous AI systems can’t exist … but they obviously have to be tested very, very tightly.”

News

Scientists Discover Stem Cells That Could Regrow Teeth and Bone

Scientists just uncovered the cellular “blueprint” that could one day let us regrow real teeth. Researchers at Science Tokyo have uncovered two distinct stem cell lineages that play a central role in forming tooth [...]

Scientists Uncover Fatal Weakness in “Zombie Cells” Linked to Cancer

A newly identified weakness in “zombie” cells may open the door to more precise cancer treatments by turning their own survival strategy against them. A new class of drugs takes advantage of a recently [...]

Bowel and Ovarian Cancers Are Dramatically Rising in Young Adults, Scientists Aren’t Sure Why

Cancer incidence is increasing, especially among younger adults, and current risk factors don’t fully account for the trend. Scientists suggest other underlying causes may be contributing. Cancer patterns in England are shifting in a [...]

New Immune Pathway Could Supercharge mRNA Cancer Vaccines

A surprising backup system in the immune response to mRNA vaccines may hold the key to more effective cancer treatments. The arrival of mRNA vaccines against SARS-CoV-2 in 2020 marked a turning point in the COVID-19 pandemic. Today, [...]

Scientists Discover “Molecular Switch” That Fuels Alzheimer’s Brain Inflammation

A newly identified trigger of brain inflammation could offer a fresh target for slowing Alzheimer’s progression. The brain has its own built-in immune system that identifies threats and responds to them. In Alzheimer’s disease, growing evidence [...]

Molecular Manufacturing: The Future of Nanomedicine – New book from NanoappsMedical Inc.

This book explores the revolutionary potential of atomically precise manufacturing technologies to transform global healthcare, as well as practically every other sector across society. This forward-thinking volume examines how envisaged Factory@Home systems might enable the cost-effective [...]

Forgotten Medicinal Plant Shows Promise in Fighting Dangerous Superbugs

A traditional medicinal plant, tormentil, shows promise against antibiotic-resistant bacteria in laboratory tests. Its compounds work by limiting bacterial growth and boosting antibiotic performance. Before the development of modern antibiotics, plant-based remedies were commonly [...]

NanoMedical Brain/Cloud Interface – Explorations and Implications. A new book from Frank Boehm

New book from Frank Boehm, NanoappsMedical Inc Founder: This book explores the future hypothetical possibility that the cerebral cortex of the human brain might be seamlessly, safely, and securely connected with the Cloud via [...]

New Research Finds Shocking Link Between Chili Peppers and Cancer

If you love spicy food, you are not alone. But scientists are taking a closer look at whether eating a lot of chili peppers could affect your cancer risk. Could your love of spicy [...]

New book from Nanoappsmedical Inc. – Global Health Care Equivalency

A new book by Frank Boehm, NanoappsMedical Inc. Founder. This groundbreaking volume explores the vision of a Global Health Care Equivalency (GHCE) system powered by artificial intelligence and quantum computing technologies, operating on secure [...]

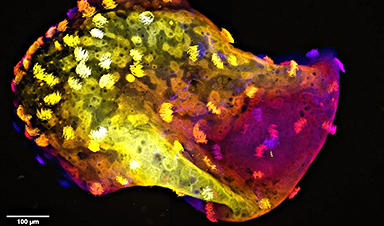

Scientists Create “Neurobots” – Living Machines With Their Own Nervous Systems

Neurobots—xenobots with neurons—show self-organized nervous systems and enhanced behaviors, revealing new insights into how biology builds functional structures. In 2020, researchers at Tufts University developed tiny living structures known as xenobots using frog cells. These microscopic organisms [...]

Our books now available worldwide!

Online Sellers other than Amazon, Routledge, and IOPP Indigo Global Health Care Equivalency in the Age of Nanotechnology, Nanomedicine and Artifcial Intelligence Global Health Care Equivalency In The Age Of Nanotechnology, Nanomedicine And Artificial [...]

Amazonian Chocolate Could Become the Next Superfood, Scientists Say

New research into Amazonian cocoa reveals that its value may extend beyond flavor alone. Chocolate from the Amazon is already known worldwide for its distinctive taste, but new research suggests it may offer even [...]

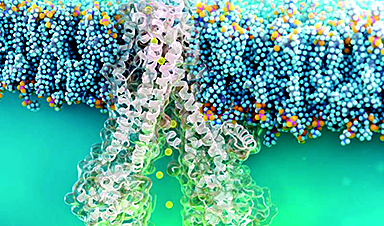

Nanobody repairs misfolded CFTR inside cells, boosting function in cystic fibrosis

A tiny antibody component could fundamentally transform the treatment of cystic fibrosis: For the first time, researchers have succeeded in developing a so-called nanobody that penetrates directly into human cells and can repair the [...]

20-Year Study Finds Daily Multivitamins Don’t Extend Lifespan

A large, decades-long study of over 390,000 U.S. adults challenges a widespread assumption about daily multivitamins. Multivitamins are a daily habit for millions of Americans, often taken with the expectation that they will extend [...]

Novel Investment Paradigms for Regenerative Healthcare Ecosystems

Introduction The transition toward regenerative healthcare ecosystems—anchored in wellness optimization, disease prevention, eradication strategies, and healthy longevity—necessitates a structural reconfiguration of capital architectures, governance models, and incentive design. Regenerative healthcare, by definition, transcends episodic [...]