The European Union will seek on Wednesday to thrash out an agreement on sweeping rules to regulate artificial intelligence, following months of difficult negotiations, in particular over how to monitor generative AI applications like ChatGPT.

ChatGPT wowed with its ability to produce poems and essays within seconds from simple user prompts.

AI proponents say the technology will benefit humanity, transforming everything from work to health care, but others worry about the risks it poses to society, fearing it could thrust the world into unprecedented chaos.

Brussels is bent on bringing big tech to heel with a powerful legal armory to protect EU citizens’ rights, especially those covering privacy and data protection.

The European Commission, the EU’s executive arm, first proposed an AI law in 2021 that would regulate systems based on the level of risk they posed. For example, the greater the risk to citizens’ rights or health, the greater the systems’ obligations.

Negotiations on the final legal text began in June, but a fierce debate in recent weeks over how to regulate general-purpose AI like ChatGPT and Google’s Bard chatbot threatened to derail the talks at the last minute.

Negotiators from the European Parliament and EU member states began discussions on Wednesday and the talks were expected to last into the evening.

Some member states worry that too much regulation will stifle innovation and hurt the chances of producing European AI giants to challenge those in the United States, including ChatGPT’s creator OpenAI as well as tech titans like Google and Meta.

Although there is no real deadline, senior EU figures have repeatedly said the bloc must finalize the law before the end of 2023.

Chasing local champions

EU diplomats, industry sources and other EU officials have warned the talks could end without an agreement as stumbling blocks remain over key issues.

Others have suggested that even if there is a political agreement, several meetings will still be needed to hammer out the law’s technical details.

And should EU negotiators reach agreement, the law would not come into force until 2026 at the earliest.

The main sticking point is how to regulate so-called foundation models, which are designed to perform a variety of tasks, with France, Germany and Italy calling for them to be excluded from the tougher parts of the law.

“France, Italy and Germany don’t want a regulation for these models,” said German MEP Axel Voss, who is a member of the special parliamentary committee on AI.

The parliament, however, believes it is “necessary… for transparency” to regulate such models, Voss said.

Late last month, the three biggest EU economies published a paper calling for an “innovation-friendly” approach for the law known as the AI Act.

Berlin, Paris and Rome do not want the law to include restrictive rules for foundation models, but instead say they should adhere to codes of conduct.

Many believe this change in view is motivated by their wish to avoid hindering the development of European champions—and perhaps to help companies such as France’s Mistral AI and Germany’s Aleph Alpha.

Another sticking point is remote biometric surveillance—basically, facial identification through camera data in public places.

The EU parliament wants a full ban on “real time” remote biometric identification systems, a ban that member states oppose. The commission had initially proposed exemptions that would allow such systems to be used to find potential victims of crime, including missing children.

There have been suggestions MEPs could concede on this point in exchange for concessions in other areas.

Brando Benifei, one of the MEPs leading negotiations for the parliament, said he saw a “willingness” by everyone to conclude talks.

But, he added, “we are not scared of walking away from a bad deal”.

France’s digital minister Jean-Noel Barrot said it was important to “have a good agreement” and suggested there should be no rush for an agreement at any cost.

“Many important points still need to be covered in a single night,” he added.

Concerns over AI’s impact and the need to supervise the technology are shared worldwide.

US President Joe Biden issued an executive order in October to regulate AI in a bid to mitigate the technology’s risks.

News

New Immune Pathway Could Supercharge mRNA Cancer Vaccines

A surprising backup system in the immune response to mRNA vaccines may hold the key to more effective cancer treatments. The arrival of mRNA vaccines against SARS-CoV-2 in 2020 marked a turning point in the COVID-19 pandemic. Today, [...]

Scientists Discover “Molecular Switch” That Fuels Alzheimer’s Brain Inflammation

A newly identified trigger of brain inflammation could offer a fresh target for slowing Alzheimer’s progression. The brain has its own built-in immune system that identifies threats and responds to them. In Alzheimer’s disease, growing evidence [...]

Molecular Manufacturing: The Future of Nanomedicine – New book from NanoappsMedical Inc.

This book explores the revolutionary potential of atomically precise manufacturing technologies to transform global healthcare, as well as practically every other sector across society. This forward-thinking volume examines how envisaged Factory@Home systems might enable the cost-effective [...]

Forgotten Medicinal Plant Shows Promise in Fighting Dangerous Superbugs

A traditional medicinal plant, tormentil, shows promise against antibiotic-resistant bacteria in laboratory tests. Its compounds work by limiting bacterial growth and boosting antibiotic performance. Before the development of modern antibiotics, plant-based remedies were commonly [...]

NanoMedical Brain/Cloud Interface – Explorations and Implications. A new book from Frank Boehm

New book from Frank Boehm, NanoappsMedical Inc Founder: This book explores the future hypothetical possibility that the cerebral cortex of the human brain might be seamlessly, safely, and securely connected with the Cloud via [...]

New Research Finds Shocking Link Between Chili Peppers and Cancer

If you love spicy food, you are not alone. But scientists are taking a closer look at whether eating a lot of chili peppers could affect your cancer risk. Could your love of spicy [...]

New book from Nanoappsmedical Inc. – Global Health Care Equivalency

A new book by Frank Boehm, NanoappsMedical Inc. Founder. This groundbreaking volume explores the vision of a Global Health Care Equivalency (GHCE) system powered by artificial intelligence and quantum computing technologies, operating on secure [...]

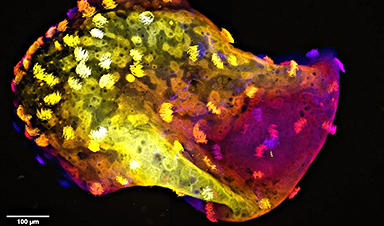

Scientists Create “Neurobots” – Living Machines With Their Own Nervous Systems

Neurobots—xenobots with neurons—show self-organized nervous systems and enhanced behaviors, revealing new insights into how biology builds functional structures. In 2020, researchers at Tufts University developed tiny living structures known as xenobots using frog cells. These microscopic organisms [...]

Our books now available worldwide!

Online Sellers other than Amazon, Routledge, and IOPP Indigo Global Health Care Equivalency in the Age of Nanotechnology, Nanomedicine and Artifcial Intelligence Global Health Care Equivalency In The Age Of Nanotechnology, Nanomedicine And Artificial [...]

Amazonian Chocolate Could Become the Next Superfood, Scientists Say

New research into Amazonian cocoa reveals that its value may extend beyond flavor alone. Chocolate from the Amazon is already known worldwide for its distinctive taste, but new research suggests it may offer even [...]

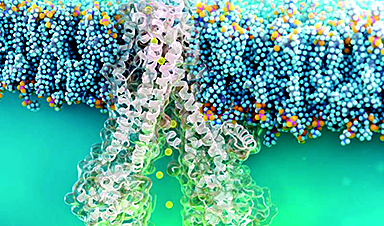

Nanobody repairs misfolded CFTR inside cells, boosting function in cystic fibrosis

A tiny antibody component could fundamentally transform the treatment of cystic fibrosis: For the first time, researchers have succeeded in developing a so-called nanobody that penetrates directly into human cells and can repair the [...]

20-Year Study Finds Daily Multivitamins Don’t Extend Lifespan

A large, decades-long study of over 390,000 U.S. adults challenges a widespread assumption about daily multivitamins. Multivitamins are a daily habit for millions of Americans, often taken with the expectation that they will extend [...]

Novel Investment Paradigms for Regenerative Healthcare Ecosystems

Introduction The transition toward regenerative healthcare ecosystems—anchored in wellness optimization, disease prevention, eradication strategies, and healthy longevity—necessitates a structural reconfiguration of capital architectures, governance models, and incentive design. Regenerative healthcare, by definition, transcends episodic [...]

What If Consciousness Exists Beyond Your Brain

Scientists still don’t know how consciousness emerges from the brain. New ideas suggest it may not emerge at all, but instead be a basic feature of reality. Is consciousness produced by the brain, or [...]

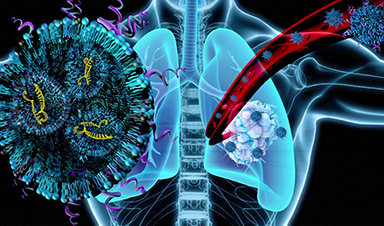

Scientists Discover Way To Treat Lung Cancer and Its Deadly Side Effect Together

A new approach using lipid nanoparticles to deliver genetic material is showing promise in tackling two major challenges in lung cancer at once.Researchers at Oregon State University have designed a new way to tackle two of [...]

Saunas Activate Your Immune System

A brief sauna session may quietly mobilize the immune system. A sauna session may do more than raise your heart rate and body temperature. A new study from Finland found that it also briefly [...]